
New AI tools like OpenAI’s ChatGPT and Microsoft’s Bing chatbot have captured the popular imagination. These tools use AI language models to generate responses to user queries that can sound eerily human. Because they are trained on enormous amounts of text and can write using standard conventions, their implications for nearly every industry are significant.

Early stories about how this technology affects higher education mostly focused on student cheating, but the conversation has started to shift. Some experts now say that as these technologies mature, they’ll become the “calculator for writing” by reducing “mindless, rote work.” While there are reasons to be excited about ChatGPT, we haven’t yet cleared the hurdles that stand between this technology and maturity. That leaves higher education facing high potential balanced by equally high risk.

In this article, we’ll look at the top 10 dangers of ChatGPT in education right now.

As colleges and universities grapple with whether to acknowledge or even encourage the use of this new technology, questions of attribution in student work are becoming harder to answer. For many, this is the most difficult danger of ChatGPT in education. Many schools are leaving the regulation of these tools up to each instructor, meaning a wide variety of policies may coexist even within a single college or university department. Questions raised include:

Of course, each of these examples requires that a student be willing to disclose their use of AI-generated writing in an assignment. This won’t always be the case, which leads to our next point.

The most immediate danger of ChatGPT and similar AI models in education is cheating. Although many schools are adopting policies that allow students to use ChatGPT for their assignments, the fact remains that students have been using ChatGPT to cheat since its release. In one example from Furman University, philosophy professor Darren Hick found that a student essay contained “well-written misinformation.” Confident errors like this are typical of AI writing and can serve as a warning sign, but unfortunately not everything produced by an AI system is so easy to detect.

One of the key dangers of ChatGPT in education is the spread of false information. ChatGPT can produce content that has every appearance of being correct (proper grammar, coherent sentences, plausible claims), but it doesn’t actually know whether its responses are accurate or grounded in fact. Moreover, because the data the model was trained on may not be current, its responses can end up completely wrong. ChatGPT has no reliable way to detect misinformation in its own output, so these errors can spread. When students are given assignments meant to teach a new concept, this misinformation could undo much of what instructors are trying to teach.

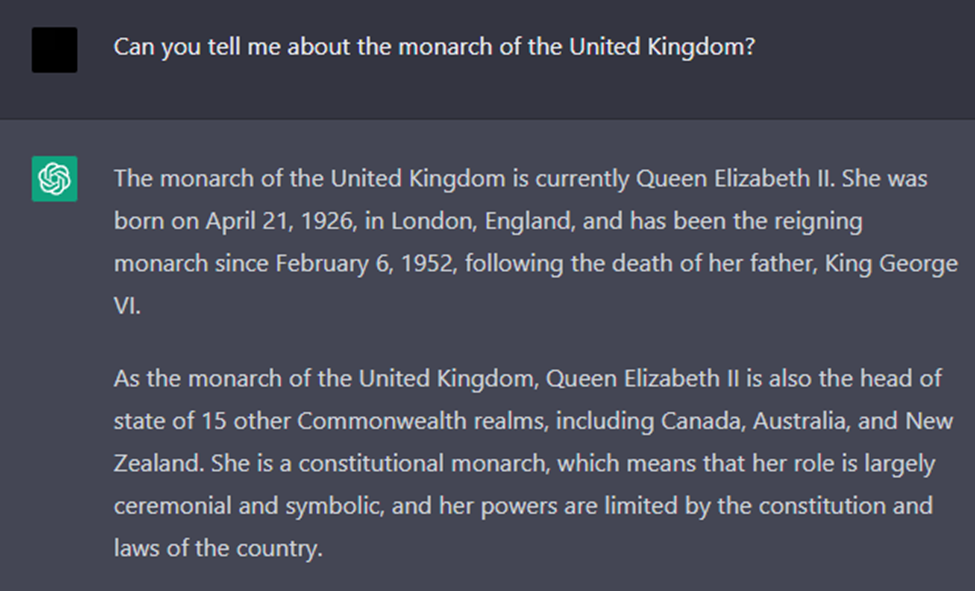

Here’s a simple example of outdated information, provided in February 2023, five months after Charles III ascended the throne.

Another key danger of ChatGPT in education is biased responses. AI systems like ChatGPT share the biases of the datasets they’re trained on. In computer science terms, this concept is often referred to as “garbage in, garbage out.” Because large language models like ChatGPT are trained on massive datasets, it’s impossible to verify that the data is free of bias. As a result, ChatGPT can perpetuate those same biases in its responses to users. As these technologies see wider use, that could mean increased discrimination and harm to entire groups of people. Schools must be careful not to appear to endorse biased ideas that surface in ChatGPT-generated content.

As a new technology, ChatGPT comes with privacy and ethical concerns that the public hasn’t yet come to terms with. Right now, there are unknowns around how data provided to ChatGPT is collected and stored. Because higher education institutions must protect the privacy of student and staff data, entering a detailed prompt into the AI, or even using content written by ChatGPT, could become problematic if that information is later exposed to unauthorized third parties.

Because ChatGPT doesn’t understand what it writes, it can produce responses that are inappropriate for an academic setting. For example, although ChatGPT may omit particularly offensive language, when asked to provide context about a piece of writing it may not distinguish between explaining outdated ideas or language and appearing to endorse them. Difficult ideas belong in higher ed, but because ChatGPT tends to restate its source material rather than transform it, AI content written about those ideas can appear to endorse them rather than challenge them.

The privacy implications underlying ChatGPT mean that it may not be able to meet the data protection standards that higher ed should strive to maintain. In the European Union, the General Data Protection Regulation (GDPR) governs an individual’s control over their personal data. In California, the California Consumer Privacy Act (CCPA) empowers individuals to request that businesses disclose or delete the personal data they collect. While OpenAI might someday find a way to comply with these regulations, as of now there’s no method to request that data be removed from the language model once it has been used in training.

A growing concern in higher education is that today’s students are not yet ready for AI because of poor AI literacy. However hard schools work to create welcoming environments, school will always come with stress, especially for younger students. Without carefully moderated responses, some of these students may be misled into believing the AI is sentient and come away with a diminished sense of self-worth after cold AI responses. Because this technology isn’t going away, academics increasingly want proactive policies that put student mental health first.

When students turn to AI to improve their writing or simply to find answers, ChatGPT and similar tools often return weak writing with awkward phrasing. For students who rely on these tools while they’re learning to write, this may eventually lead to poor writing habits. One example of this limitation is AI’s difficulty with idioms. Another is the tendency of AI-generated content to be wordy without saying much. Together, these deficiencies can produce writing that, understandably, reads unnaturally.

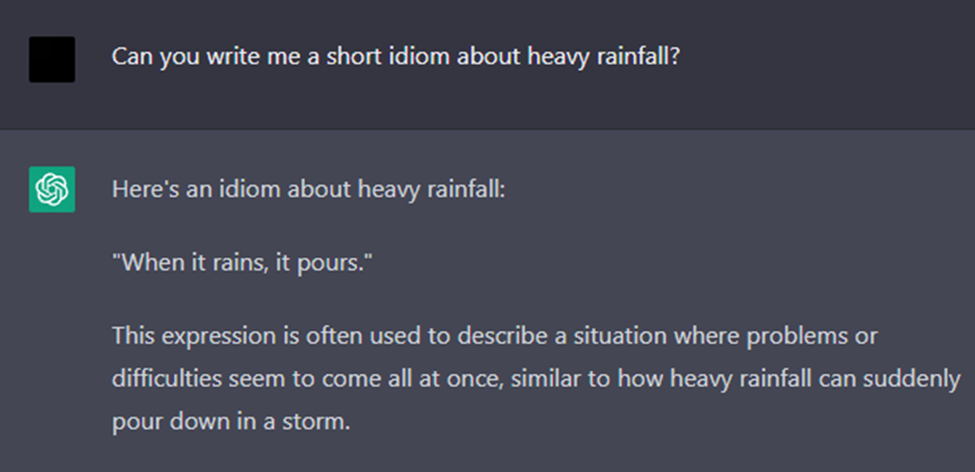

ChatGPT often can’t distinguish between the figurative and the literal; in this example, it provides a literal response to a request for figurative language.

On one hand, because ChatGPT depends on vast amounts of human-written text to generate its responses, it can be argued that AI tools will always need human input. On the other hand, because AI output relies entirely on existing human work, these tools lack the capacity for original expression. This goes beyond debates about originality in art: in higher education, it means that content produced with ChatGPT will lack genuine originality. What does higher education look like when students are trained to set aside their own insights and reproduce only ideas that a large language model has already been exposed to?

ChatGPT is an impressive tool, but the risks it introduces in higher education are numerous and worrying. Schools will need to weigh the benefits against these dangers, and some may ultimately question whether it’s worth the risk.

Thankfully, the risks inherent in ChatGPT are not found in all AI tools. By training AI on curated data, schools can leverage their existing content and knowledge bases without taking on the dangers of ChatGPT we’ve covered here. To find out more about introducing an AI chatbot to your school or organization, take a look at Comm100 Chatbot, an AI bot built for higher ed.